The Vision of Invisible Intelligence

Ambient computing promises environments saturated with intelligence: spaces that anticipate needs, respond to presence, and fade into the background when not required.

Mark Weiser, then head of the Computer Science Laboratory at Xerox PARC, articulated this vision in his 1991 Scientific American article: “The most profound technologies are those that disappear. They weave themselves into the fabric of everyday life until they are indistinguishable from it.” Three decades later, that vision is finally becoming tangible. But the path from vision to reality runs through hardware, and specifically through the architectures that fuse data from diverse sensors into a coherent understanding of human context.

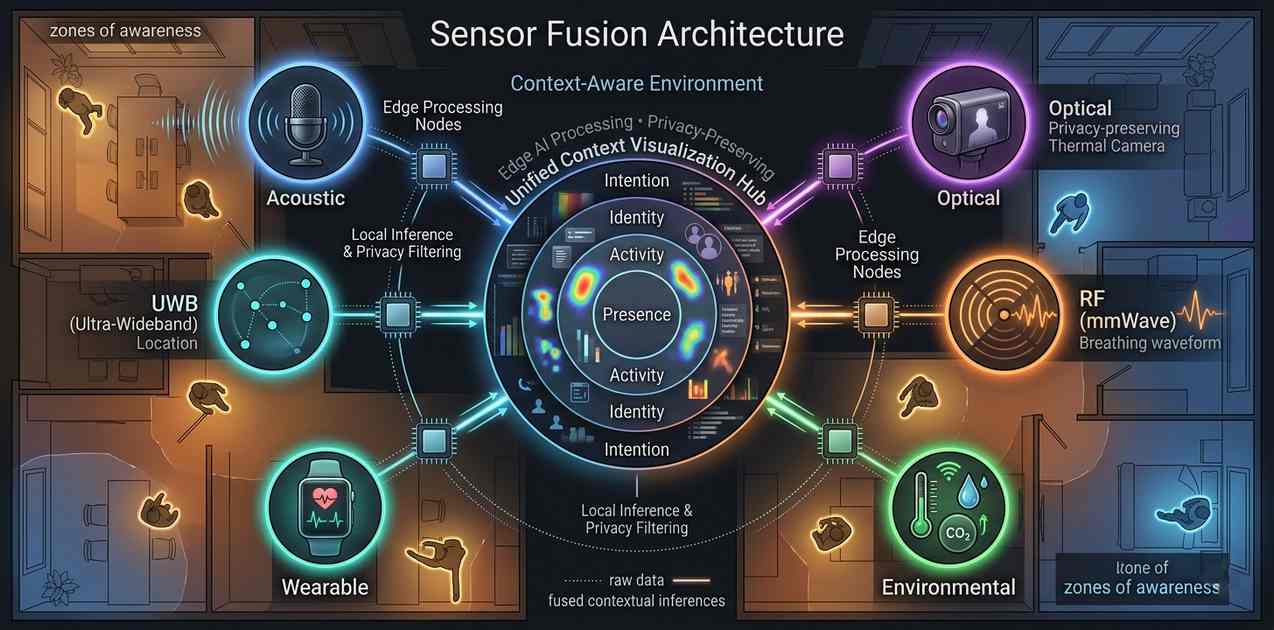

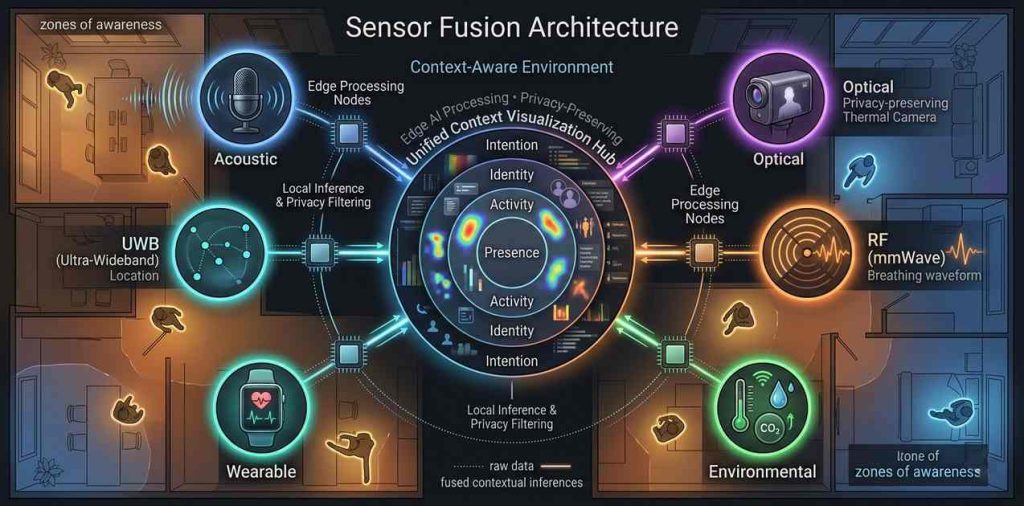

Ambient computing environments do not rely on any single sensor or device. They depend on sensor fusion architectures: systems that combine data from multiple modalities to infer human activity, presence, intention, and need. These architectures must process data in real time, respect privacy boundaries, operate within strict power constraints, and deliver intelligence without demanding explicit user attention. The hardware challenges are immense, and the solutions are reshaping how we think about computing itself.

Defining Ambient Computing: Beyond IoT

Ambient computing is often conflated with the Internet of Things (IoT), but the distinction is critical. IoT connects devices to the internet; ambient computing makes those devices contextually intelligent. An IoT-enabled thermostat can be controlled from a smartphone. An ambient computing thermostat learns occupancy patterns, adjusts based on time of day, integrates with calendar data, and modulates temperature before the occupant even realizes discomfort.

The difference is context awareness—the ability to understand the human situation and respond appropriately without explicit commands. Context encompasses location, activity, identity, time, environmental conditions, social setting, and even emotional state. Inferring this from raw sensor data is the fundamental challenge of ambient computing hardware.

Weiser’s 1991 vision, introduced above, described computing that recedes from view while remaining available everywhere. The hardware to realize that vision is only now reaching maturity.

The Sensor Fusion Hierarchy

Sensor fusion in ambient computing operates across multiple levels of abstraction, from raw data to semantic understanding.

Level 1: Data-Level Fusion

At the lowest level, raw sensor data is combined to improve signal quality and reduce uncertainty. A presence detection system might fuse passive infrared (PIR) data with ultrasonic distance measurements to eliminate false triggers from pets or moving curtains. Time-of-flight sensors combine with thermal imaging to distinguish human presence from other heat sources. This level operates on millisecond timescales, requiring low-latency processing close to the sensors.
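
A minimal Python sketch of the PIR-plus-ultrasonic example above. The `Sample` type, the `human_present` function, and every threshold are illustrative assumptions, not values from any product: the idea is simply that presence is declared only when both sensors agree across several samples in a short window.

```python
from dataclasses import dataclass

@dataclass
class Sample:
    pir_triggered: bool   # PIR reports a moving warm body
    distance_m: float     # ultrasonic range reading, in metres

def human_present(window, baseline_m, min_shift_m=0.3, min_agree=3):
    """Data-level fusion: count samples where BOTH sensors indicate a person.

    baseline_m is the range measured in the empty room (hypothetical calibration).
    A sample agrees when the PIR fired and the range dropped by min_shift_m or more.
    """
    agree = sum(
        1 for s in window
        if s.pir_triggered and (baseline_m - s.distance_m) >= min_shift_m
    )
    return agree >= min_agree

# A person walks in: PIR fires and the range shortens in most samples.
person = [Sample(True, 2.1), Sample(True, 2.0), Sample(True, 1.9), Sample(False, 3.0)]
# A curtain moves: the range changes but the PIR never fires.
curtain = [Sample(False, 2.4), Sample(False, 2.5), Sample(False, 2.4), Sample(False, 2.6)]
```

With a 3.0 m empty-room baseline, `human_present(person, 3.0)` is true and `human_present(curtain, 3.0)` is false, because neither sensor alone is allowed to decide.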

Level 2: Feature-Level Fusion

At the intermediate level, extracted features from multiple sensors are combined to infer specific attributes. Acoustic features (voice activity, sound signatures) fuse with motion features (gait, gesture) to identify individual users. Facial recognition from cameras combines with voice recognition from microphones to verify identity with higher confidence than either modality alone. This level operates on sub-second to second timescales, typically handled by edge AI processors.
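
One way to picture feature-level fusion is to normalise each modality's feature vector, concatenate the results, and match against enrolled templates. The sketch below assumes tiny hand-made feature vectors and a nearest-template rule; production systems would more likely use learned embeddings and a trained classifier, and all names here are hypothetical.

```python
import math

def l2_normalize(vec):
    """Scale a feature vector to unit length so no modality dominates by magnitude."""
    norm = math.sqrt(sum(x * x for x in vec)) or 1.0
    return [x / norm for x in vec]

def fuse(acoustic, motion):
    """Feature-level fusion: normalise each modality, then concatenate."""
    return l2_normalize(acoustic) + l2_normalize(motion)

def identify(fused, enrolled):
    """Return the enrolled user whose fused template is nearest (Euclidean distance)."""
    return min(enrolled, key=lambda user: math.dist(fused, enrolled[user]))

# Hypothetical enrolment templates: (voice features, gait features) per user.
enrolled = {
    "alice": fuse([0.9, 0.1], [1.2, 0.3]),
    "bob": fuse([0.2, 0.8], [0.4, 1.1]),
}
who = identify(fuse([0.8, 0.2], [1.0, 0.4]), enrolled)
```

Here `who` resolves to `"alice"`, since both the voice and gait parts of the query sit close to her template.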

Level 3: Decision-Level Fusion

At the highest level, inferences from multiple modalities combine to determine context and trigger actions. Occupancy detection (from PIR, cameras, acoustic sensors) fuses with activity recognition (from wearables, environmental sensors) to determine appropriate responses: lighting adjustment, climate control, audio routing, notification filtering. This level operates on seconds to minutes, often involving distributed processing across edge and cloud resources.
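
As a rough illustration of decision-level fusion, the sketch below combines per-modality occupancy estimates with a weighted average and then maps the fused context to actions. The weights, the 0.6 threshold, the activity labels, and the action names are all assumptions made for the example.

```python
def fuse_decisions(votes):
    """votes: list of (probability_occupied, weight) pairs, one per modality.

    Returns the weight-averaged probability that the room is occupied.
    """
    total = sum(weight for _, weight in votes)
    return sum(p * weight for p, weight in votes) / total

def choose_actions(p_occupied, activity, threshold=0.6):
    """Map fused context to a list of (hypothetical) actuator commands."""
    if p_occupied < threshold:
        return ["lights_off", "hvac_setback"]
    if activity == "sleeping":
        return ["lights_off", "mute_notifications"]
    return ["lights_on", "hvac_comfort"]

# PIR is trusted most; the acoustic sensor disagrees but carries less weight.
p = fuse_decisions([(0.9, 2.0), (0.7, 1.0), (0.2, 1.0)])
actions = choose_actions(p, activity="working")
```

Each modality has already made its own inference before this step, which is what distinguishes decision-level fusion from the two lower levels.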

The Sensor Modality Landscape

Context-aware environments draw on an expanding palette of sensor types, each capturing different dimensions of human activity.

Acoustic Sensors

Microphones capture the richest single stream of contextual information. Voice commands, conversation patterns, ambient noise levels, and acoustic events (door slams, glass breaking, appliance sounds) all provide contextual signals. Modern acoustic sensor arrays combine multiple microphones for beamforming, source separation, and localization. The challenge is balancing utility with privacy—processing audio locally to extract features without transmitting raw speech to the cloud.
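
The local-processing idea can be sketched as reducing each audio frame to a few summary features and discarding the raw samples. The example below assumes PCM samples already scaled to the range -1 to 1 and uses two classic features (RMS energy and zero-crossing rate); the function names and the 0.05 gate are hypothetical, and real systems would typically extract richer features.

```python
import math

def frame_features(samples):
    """Return (rms, zero_crossing_rate) for one frame of samples in [-1, 1]."""
    rms = math.sqrt(sum(x * x for x in samples) / len(samples))
    crossings = sum(
        1 for a, b in zip(samples, samples[1:]) if (a < 0) != (b < 0)
    )
    return rms, crossings / (len(samples) - 1)

def summarize(frames, rms_gate=0.05):
    """Keep features only for frames above the energy gate; raw audio is not retained."""
    return [f for f in map(frame_features, frames) if f[0] >= rms_gate]

loud = [0.5, -0.5, 0.5, -0.5]
silent = [0.0, 0.0, 0.0, 0.0]
features = summarize([loud, silent])
```

Only the feature tuples in `features` would ever leave the device in this scheme; the silent frame is dropped entirely.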

Optical Sensors

Cameras provide unparalleled detail about human activity, position, and identity. But they also raise the most significant privacy concerns. Ambient computing increasingly relies on privacy-preserving optical sensing—thermal cameras that detect presence without identifying features, low-resolution imagers that capture posture but not facial details, and event-based cameras that transmit only pixel changes rather than full frames.

Radio Frequency Sensors

RF sensing has emerged as a privacy-preserving alternative to cameras. Wi-Fi sensing uses channel state information (CSI) to detect presence, movement, and even breathing patterns. Radar sensors—operating in mmWave bands—provide precise location tracking, gesture recognition, and vital sign monitoring without capturing identifiable imagery. The 60 GHz and 77 GHz bands have become particularly important for ambient sensing, offering centimeter-level resolution with minimal privacy intrusion.

Environmental Sensors

Temperature, humidity, air quality, light levels, and barometric pressure provide essential context for environmental response. CO₂ sensors, in particular, have become critical for occupancy estimation in commercial buildings, correlating strongly with human presence and ventilation needs. These sensors are low-bandwidth and power-efficient, making them ideal for battery-powered deployments.
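
The link between CO₂ and occupancy can be made concrete with a steady-state mass balance: in a well-mixed room, the excess CO₂ concentration times the ventilation rate equals the total CO₂ generated by occupants. The sketch below rests on those assumptions, plus an assumed ballpark generation rate of 0.005 L/s per sedentary adult; the function name and parameters are illustrative.

```python
def estimate_occupants(co2_indoor_ppm, co2_outdoor_ppm, ventilation_l_per_s,
                       gen_l_per_s_per_person=0.005):
    """Steady-state estimate: N = Q * (C_in - C_out) / G.

    Q is the outdoor-air ventilation rate (L/s), concentrations are in ppm
    (converted to a volume fraction), and G is the assumed per-person CO2
    generation rate (L/s). Ignores transients and imperfect mixing.
    """
    excess_fraction = max(co2_indoor_ppm - co2_outdoor_ppm, 0.0) * 1e-6
    return ventilation_l_per_s * excess_fraction / gen_l_per_s_per_person

# 820 ppm indoors, 420 ppm outdoors, 100 L/s of outdoor air.
n = estimate_occupants(820, 420, 100)
```

With these inputs the estimate comes to about eight people. Because CO₂ builds up and decays over minutes, an estimate like this lags real occupancy and is usually fused with faster sensors.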

Wearable and Body Sensors

The boundary between ambient computing and wearable computing is increasingly porous. Smartwatches, fitness trackers, and medical wearables provide biometric data—heart rate, skin conductance, movement—that enriches ambient context. When a user approaches their smart home, their wearable can signal identity and physiological state, enabling personalized environmental response without cameras or explicit interaction.

Fusion Architectures: Distributed to Centralized

Sensor fusion architectures exist on a spectrum from fully distributed to fully centralized, with trade-offs in latency, bandwidth, privacy, and reliability.

Edge-Only Fusion

In edge-only architectures, all sensor fusion occurs on local devices. A smart speaker processes audio locally to detect wake words and voice commands. A smart thermostat integrates its own temperature sensors with occupancy detection to adjust settings. The advantage is privacy—sensitive data never leaves the device. The limitation is that context is siloed—the speaker doesn’t know what the thermostat knows, and the thermostat doesn’t know what the lights know.

Gateway-Based Fusion

Gateway architectures aggregate data from multiple sensors at a local hub—a smart home controller, a set-top box, or an edge server. The gateway performs cross-sensor fusion, combining data from acoustic, optical, and environmental sensors to build a unified context model. This approach balances local processing with system-level intelligence. Products like Apple’s HomePod, Amazon’s Echo Hub, and Google’s Nest Hub exemplify this architecture, though their fusion capabilities remain relatively basic compared to the full ambient computing vision.
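
A unified context model at the gateway can be as simple as a shared store that devices publish observations into, with stale readings aging out. The sketch below is one hypothetical shape for such a store; the class names, keys, and 30-second expiry are assumptions and do not reflect any vendor's hub software.

```python
import time
from dataclasses import dataclass, field

@dataclass
class Observation:
    source: str       # which device reported the value
    value: object
    timestamp: float  # seconds, same clock as `now`

@dataclass
class RoomContext:
    max_age_s: float = 30.0
    latest: dict = field(default_factory=dict)

    def publish(self, key, source, value, now=None):
        """Record the newest observation for a context key."""
        stamp = time.time() if now is None else now
        self.latest[key] = Observation(source, value, stamp)

    def get(self, key, now=None, default=None):
        """Return the value for a key, or default if missing or stale."""
        now = time.time() if now is None else now
        obs = self.latest.get(key)
        if obs is None or now - obs.timestamp > self.max_age_s:
            return default
        return obs.value

    def snapshot(self, now=None):
        """All fresh context values, regardless of which device supplied them."""
        now = time.time() if now is None else now
        return {k: o.value for k, o in self.latest.items()
                if now - o.timestamp <= self.max_age_s}

ctx = RoomContext()
ctx.publish("occupied", "pir-hallway", True, now=100.0)
ctx.publish("temp_c", "thermostat", 21.5, now=110.0)
```

Any device or rule can then read `ctx.snapshot()` instead of querying each sensor, which is the cross-device visibility that edge-only designs lack.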

Cloud-Enhanced Fusion

Cloud architectures offload complex fusion algorithms to remote servers, enabling more sophisticated inference at the cost of latency and privacy. Cloud fusion excels at long-term pattern learning—understanding occupancy schedules, activity routines, and environmental preferences across days and weeks. Hybrid approaches process time-sensitive fusion at the edge while offloading model training and long-term pattern recognition to the cloud.

Distributed Intelligence

Emerging architectures distribute intelligence across the sensor network itself. Each node performs local processing and communicates only relevant features or events to neighbors, with context emerging from network-wide coordination. This approach overlaps with what is sometimes called “fog computing” and with distributed forms of edge AI, and it aims to combine the privacy of local processing with the low latency and fault tolerance of a system that has no single point of failure. Implementation requires sophisticated algorithms for distributed inference and consensus, both areas of active research.
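
Average consensus is one well-known building block for this kind of coordination: each node repeatedly nudges its estimate toward those of its neighbors, and all nodes converge on the network-wide mean without any central collector. The sketch below assumes a small, fixed, symmetric topology and a step size below one over the maximum node degree; names and values are illustrative.

```python
def consensus_step(values, neighbors, step=0.3):
    """One synchronous round: each node moves toward its neighbors' values."""
    return {
        node: v + step * sum(values[n] - v for n in neighbors[node])
        for node, v in values.items()
    }

def run_consensus(values, neighbors, rounds=50, step=0.3):
    """Iterate consensus_step; with symmetric links the mean is preserved."""
    for _ in range(rounds):
        values = consensus_step(values, neighbors, step)
    return values

# Three nodes in a line (a - b - c), each with a local occupancy confidence.
neighbors = {"a": ["b"], "b": ["a", "c"], "c": ["b"]}
result = run_consensus({"a": 0.9, "b": 0.6, "c": 0.0}, neighbors)
```

After 50 rounds every node holds a value very close to 0.5, the mean of the three starting estimates, even though nodes `a` and `c` never communicate directly.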

Edge AI Processors for Sensor Fusion

The computational demands of real-time sensor fusion have driven a new class of edge AI processors designed specifically for multimodal inference.

Dedicated NPUs for Sensor Fusion

Neural processing units optimized for sensor fusion combine multiple input modalities within a single inference pipeline. Syntiant’s NDP-series Neural Decision Processors, including the NDP200, exemplify this approach, pairing a low-power neural inference engine with sensor interfaces. Parts in this family are positioned for always-on workloads such as voice command detection, acoustic event detection, and activity classification from accelerometer data at power levels on the order of a milliwatt, though supported modalities and power figures vary by part and should be checked against the datasheet. For ambient computing, this enables always-on context awareness without significant battery drain.

Multi-Core Fusion Processors

More complex ambient environments require processors that can handle diverse workloads: DSPs for audio processing, NPUs for neural inference, and general-purpose cores for system coordination. The Ambiq Apollo4 Plus is one example of a highly integrated part in this class, built around an Arm Cortex-M4F core. Ambiq’s Subthreshold Power Optimized Technology (SPOT) targets very low active power, which can allow months of operation on a coin-cell battery depending on duty cycle, an essential property for distributed sensor networks.

Always-On Sensor Hubs

The most sophisticated ambient systems dedicate entire processors to continuous sensor fusion. NXP’s i.MX RT1170 includes a 1 GHz Cortex-M7 for high-performance fusion and a 400 MHz Cortex-M4 that can be used for always-on background processing. The always-on core continuously monitors low-power sensors (PIR, accelerometer, microphone) and wakes the high-performance core only when significant events occur. This hierarchical processing keeps average power far below what running the high-performance core continuously would require; how low it goes depends on how often the system wakes.
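
The benefit of a wake-on-event hierarchy is easiest to see as duty-cycle arithmetic. The sketch below uses made-up power figures (not datasheet values for any part named here) and assumes the always-on core keeps running while the high-performance core is awake.

```python
def average_power_mw(p_always_on_mw, p_active_mw, wakeups_per_hour,
                     active_s_per_wakeup):
    """Average power = always-on floor + duty cycle * high-performance power."""
    duty = min(wakeups_per_hour * active_s_per_wakeup / 3600.0, 1.0)
    return p_always_on_mw + duty * p_active_mw

# Hypothetical figures: 0.5 mW monitoring floor, 300 mW when the big core runs,
# 12 wake-ups per hour lasting 2 seconds each.
avg = average_power_mw(0.5, 300.0, wakeups_per_hour=12, active_s_per_wakeup=2.0)
```

With these numbers the average is about 2.5 mW, of which 2.0 mW comes from just 24 seconds per hour of high-performance activity. The wake-up rate, not the monitoring floor, tends to dominate the budget.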

Privacy-Preserving Fusion

The greatest barrier to ambient computing adoption is not technical capability but privacy concern. Sensor fusion architectures must balance contextual intelligence with individual privacy.

On-Device Processing

Processing sensitive data on the device rather than transmitting it to the cloud is the first line of defense. Modern edge AI processors can perform sophisticated inference (face detection, voice recognition, activity classification) entirely locally. The Google Coral platform, for example, provides Edge TPU acceleration for on-device inference, enabling privacy-preserving ambient intelligence without cloud dependency.

Differential Privacy

For systems that require cloud connectivity, differential privacy techniques add calibrated noise to transmitted data, preventing individual identification while preserving aggregate utility. Smart home data aggregated across neighborhoods can optimize grid operations without exposing individual consumption patterns.
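
The standard way to add calibrated noise to a numeric aggregate is the Laplace mechanism: clip each contribution to a known range, then add Laplace noise with scale equal to the sensitivity divided by the privacy parameter epsilon. The sketch below applies it to a neighborhood energy total; the function names, the 5 kWh cap, and the epsilon value are assumptions for illustration, and it treats adding or removing one household as the unit of privacy.

```python
import random

def laplace_noise(scale, rng):
    """Laplace(0, scale) sample: the difference of two i.i.d. exponentials."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)

def private_total_kwh(household_kwh, cap_kwh, epsilon, rng=None):
    """Release a noisy sum of per-household consumption.

    Each reading is clipped to [0, cap_kwh], so one household can change the
    sum by at most cap_kwh (the sensitivity). Noise scale = cap_kwh / epsilon.
    """
    if rng is None:
        rng = random.Random()
    clipped = [min(max(x, 0.0), cap_kwh) for x in household_kwh]
    return sum(clipped) + laplace_noise(cap_kwh / epsilon, rng)

readings = [3.2, 4.1, 100.0]   # the outlier is clipped to the 5 kWh cap
noisy = private_total_kwh(readings, cap_kwh=5.0, epsilon=1.0)
```

Smaller epsilon means more noise and stronger privacy. With only three households the noise would swamp the signal, which is why the technique suits aggregates over many homes.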

Homomorphic Encryption

Emerging hardware support for homomorphic encryption enables computation on encrypted data—the cloud can process sensor data without ever decrypting it. While currently computationally expensive, dedicated accelerators for homomorphic encryption are beginning to appear, promising a future where ambient intelligence operates on data that remains cryptographically private.
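
To make "computation on encrypted data" concrete, the sketch below implements a toy version of the Paillier cryptosystem, which is additively homomorphic: multiplying two ciphertexts yields an encryption of the sum of the plaintexts. This is a deliberately simplified illustration with tiny, insecure primes; it supports only addition, and it is not the fully homomorphic kind of scheme the paragraph above has in mind for general computation. Function names are hypothetical.

```python
import math
import random

def keygen(p=1009, q=1013):
    """Toy key generation from two small primes (insecure; illustration only)."""
    n = p * q
    lam = (p - 1) * (q - 1) // math.gcd(p - 1, q - 1)   # lcm(p-1, q-1)
    mu = pow(lam, -1, n)     # modular inverse; valid for generator g = n + 1
    return n, (lam, mu)

def encrypt(n, m, rng=random):
    """Encrypt integer m (0 <= m < n) under public key n, using g = n + 1."""
    n2 = n * n
    while True:
        r = rng.randrange(1, n)
        if math.gcd(r, n) == 1:
            break
    return (1 + m * n) * pow(r, n, n2) % n2

def add_encrypted(n, c1, c2):
    """Homomorphic addition: the product of ciphertexts encrypts the sum."""
    return c1 * c2 % (n * n)

def decrypt(n, private_key, c):
    lam, mu = private_key
    x = pow(c, lam, n * n)
    return (x - 1) // n * mu % n

n, private_key = keygen()
c1 = encrypt(n, 410)     # e.g. a CO2 reading from one sensor
c2 = encrypt(n, 425)     # a reading from another sensor
total = decrypt(n, private_key, add_encrypted(n, c1, c2))
```

The party that calls `add_encrypted` never sees 410 or 425, yet the key holder recovers their sum, 835. Practical deployments would use vetted libraries and much larger keys.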

User Control and Transparency

Technical privacy protections must be complemented by user control. Ambient computing systems require clear indicators of when sensors are active, granular control over which data is shared, and intuitive mechanisms for temporary privacy—a “privacy mode” that suspends sensing without disabling functionality entirely.

Applications: Where Ambient Computing Is Emerging

Smart Homes

The residential market represents the most visible ambient computing deployment. Voice assistants have become the primary interface, but true ambient intelligence remains limited. Advanced systems—like Josh.ai and high-end Crestron installations—demonstrate what’s possible: rooms that know who is present, adjust lighting and temperature to individual preferences, route audio to follow the user, and anticipate needs based on time of day and activity. The hardware exists; the challenge is cost and integration complexity.

Smart Buildings

Commercial buildings have embraced ambient computing for energy efficiency and occupant experience. Sensor networks detect occupancy to optimize HVAC and lighting, with energy savings of 30-50% compared to scheduled operation often cited, though results vary with building type and baseline. Workspace management systems track desk utilization, enabling hot-desking and space optimization. The Enlighted platform (now part of Siemens) deploys sensor networks that combine occupancy detection, environmental monitoring, and lighting control in a single integrated system.

Healthcare and Senior Living

Ambient computing offers transformative potential for healthcare—particularly for aging in place. Sensor networks can detect falls, monitor activity patterns for signs of decline, track medication adherence, and alert caregivers to anomalies. The CarePredict platform uses wearable sensors combined with environmental monitoring to detect changes in activity, sleep, and behavior that may indicate emerging health issues. Privacy-preserving radar sensors from Vayyar and Socionext detect falls and monitor vital signs without cameras, addressing the privacy concerns that have limited adoption.

Hospitality and Retail

Hotels and retail environments are deploying ambient intelligence to personalize experiences. Hotel rooms can recognize returning guests and adjust lighting, temperature, and entertainment preferences. Retail spaces can track customer flow, optimize staffing, and personalize digital signage. Amazon’s Just Walk Out technology represents ambient retail’s frontier: stores where sensors track customer selections and automatically charge their accounts, eliminating the checkout line.

The Integration Challenge: Why Ambient Computing Remains Fragmented

Despite technological maturity, ambient computing remains fragmented. Smart home devices from different manufacturers rarely interoperate. Context captured by one system is inaccessible to others. Users must manage multiple apps, multiple accounts, and multiple voice assistants.

The root cause is the absence of standardized context models—common frameworks for representing and sharing contextual information across devices and platforms. Initiatives like Matter (for device connectivity) and OLA (Open Lighting Architecture) address basic interoperability but don’t extend to the contextual intelligence layer. The Home Assistant open-source platform demonstrates what’s possible when diverse devices share a common context model, but it remains a hobbyist solution.

Industry leaders recognize the problem. Apple’s HomeKit, Google Home, and Amazon’s Alexa all aspire to be the unifying ambient platform. But walled gardens limit the vision: users must choose an ecosystem and accept its limitations.

Future Directions: The Next Decade of Ambient Computing

Generative AI for Context Understanding

Large language models are beginning to transform ambient computing’s intelligence layer. Rather than hard-coded rules (“if occupancy detected and after sunset, turn on lights”), generative models can interpret natural language requests and adapt to ambiguous situations. A user saying “it’s a bit bright in here” can trigger contextually appropriate responses based on time of day, activity, and individual preferences—without programming specific thresholds.

Neuromorphic Sensing

Traditional sensors capture continuous streams of data, much of which contains no relevant information. Neuromorphic sensing, typified by event-based cameras and often paired with spiking neural network accelerators, reports only changes in the scene, dramatically reducing data volume and power consumption. Prophesee and Sony have commercialized event-based vision sensors that can consume far less power than conventional cameras when the scene is mostly static, while offering microsecond-scale temporal resolution. For ambient computing, this enables continuous visual awareness without the power penalty of traditional cameras.
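
The data reduction from event-based sensing can be approximated in software by differencing two frames and emitting an event only where a pixel changed by more than a threshold. Real event cameras do this asynchronously per pixel in hardware, typically on log intensity, so the sketch below is a frame-based emulation under simplifying assumptions; the function name and threshold are hypothetical.

```python
def events_between(prev, curr, threshold=15):
    """Return (x, y, polarity) for each pixel whose brightness changed enough.

    prev and curr are equal-sized 2D lists of intensities (0-255).
    polarity is +1 for brighter, -1 for darker.
    """
    events = []
    for y, (row_prev, row_curr) in enumerate(zip(prev, curr)):
        for x, (a, b) in enumerate(zip(row_prev, row_curr)):
            if abs(b - a) >= threshold:
                events.append((x, y, 1 if b > a else -1))
    return events

prev = [[10, 10, 10],
        [10, 10, 10]]
curr = [[10, 40, 10],
        [10, 10, 10]]
changed = events_between(prev, curr)
```

Only one of six pixels produces output here, and a completely static scene would produce none, which is where the bandwidth and power savings come from.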

Ultra-Wideband for Spatial Context

Ultra-wideband (UWB) technology is transforming spatial awareness in ambient environments. Unlike conventional Bluetooth signal-strength estimates, UWB time-of-flight measurement provides centimeter-level distance and angle information, enabling systems to know not just that a user is present but where they are and which way their device is pointing. Apple’s U1 chip and the FiRa Consortium’s specifications, which build on IEEE 802.15.4z, are driving adoption. UWB-enabled ambient systems can route audio to the speaker nearest the user, adjust lighting to follow their position, and unlock doors as they approach, producing contextual responses that feel almost telepathic.
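
UWB ranging rests on simple time-of-flight arithmetic. In single-sided two-way ranging, the initiator measures the round-trip time, subtracts the responder's known reply delay, and halves the remainder. The sketch below shows that calculation and a nearest-speaker lookup; it ignores clock drift and antenna delays, and the room coordinates and names are invented for the example.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0

def twr_distance_m(t_round_s, t_reply_s):
    """Single-sided two-way ranging: distance = c * (t_round - t_reply) / 2."""
    return SPEED_OF_LIGHT_M_S * (t_round_s - t_reply_s) / 2.0

def nearest_speaker(user_xy, speakers):
    """Pick the speaker closest to the user's estimated 2D position."""
    return min(speakers, key=lambda name: math.dist(user_xy, speakers[name]))

# A tag 3 m away: about 10 ns each way, plus a 200 microsecond reply delay.
t_reply = 200e-6
t_round = t_reply + 2 * (3.0 / SPEED_OF_LIGHT_M_S)
distance = twr_distance_m(t_round, t_reply)

speakers = {"kitchen": (0.5, 1.5), "living_room": (4.0, 3.0)}
target = nearest_speaker((1.0, 1.0), speakers)
```

Because light covers about 30 cm per nanosecond, centimeter-level ranging requires timing resolution well under a nanosecond, which is what UWB's wide bandwidth makes possible.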

Energy Harvesting Sensors

The vision of pervasive ambient sensing founders on the reality of battery replacement. Energy harvesting, which captures ambient light, thermal gradients, radio frequency energy, or mechanical vibration, enables sensors that can run for years without battery changes, provided the harvested power covers the average load. e-peas and onsemi (formerly ON Semiconductor) have developed power management ICs that efficiently harvest energy from diverse sources, enabling set-and-forget sensor deployments.

Federated Learning for Privacy

Federated learning trains ambient intelligence models across distributed devices without centralizing sensitive data. Each device trains locally on its own sensor data, sharing only model updates (not raw data) with a central coordinator. The coordinator aggregates updates into a global model, which is then redistributed. This architecture enables ambient systems that improve over time while keeping raw data on the device. Google has demonstrated the approach for keyboard prediction in Gboard; similar techniques are being adapted for ambient intelligence.
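
The coordinator's aggregation step is commonly done with federated averaging: a mean of the devices' model weights, weighted by how many local examples each device trained on. The sketch below shows only that step, with models represented as flat lists of floats; local training, secure aggregation, and communication are out of scope, and the names are illustrative.

```python
def federated_average(updates):
    """updates: list of (weights, num_examples) from participating devices.

    Returns the example-weighted mean of the weight vectors.
    """
    total = sum(count for _, count in updates)
    dim = len(updates[0][0])
    return [
        sum(weights[i] * count for weights, count in updates) / total
        for i in range(dim)
    ]

# Two homes: one trained on 10 local examples, the other on 30.
global_model = federated_average([
    ([1.0, 2.0], 10),
    ([3.0, 4.0], 30),
])
```

The result, `[2.5, 3.5]`, sits closer to the second home's weights because it contributed three times as many examples. No raw sensor data crosses the network at any point.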

Conclusion: Computing That Disappears

The hardware for ambient computing has arrived. Edge AI processors enable sophisticated sensor fusion at milliwatt power levels. Privacy-preserving sensing modalities capture context without compromising personal boundaries. Standardized connectivity protocols are slowly breaking down silos. What remains is the integration—building systems that combine these capabilities into coherent, trustworthy environments.

Mark Weiser’s vision of invisible computing was not about technology’s absence but its integration so complete that attention is freed for what matters. The ambient computing hardware of today—sensor fusion architectures that understand presence, anticipate needs, and respond appropriately—represents the foundation of that integration.

The technology is not yet invisible. It remains visible in the sensors on ceilings, the hubs on shelves, the complexity of setup and maintenance. But the trajectory is clear. Each generation of hardware reduces friction, improves intelligence, and fades further into the background. The ultimate ambient computer is not a device at all—it is the environment itself, aware and responsive, yet never demanding attention. That future is no longer science fiction. It is being built, sensor by sensor, algorithm by algorithm, right now.