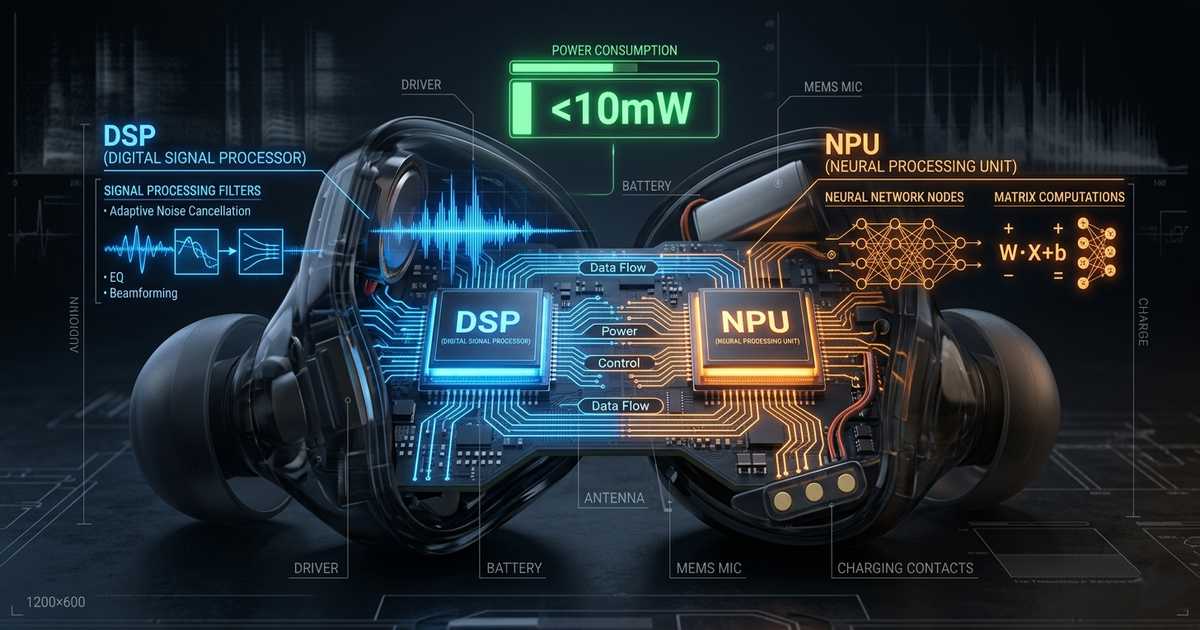

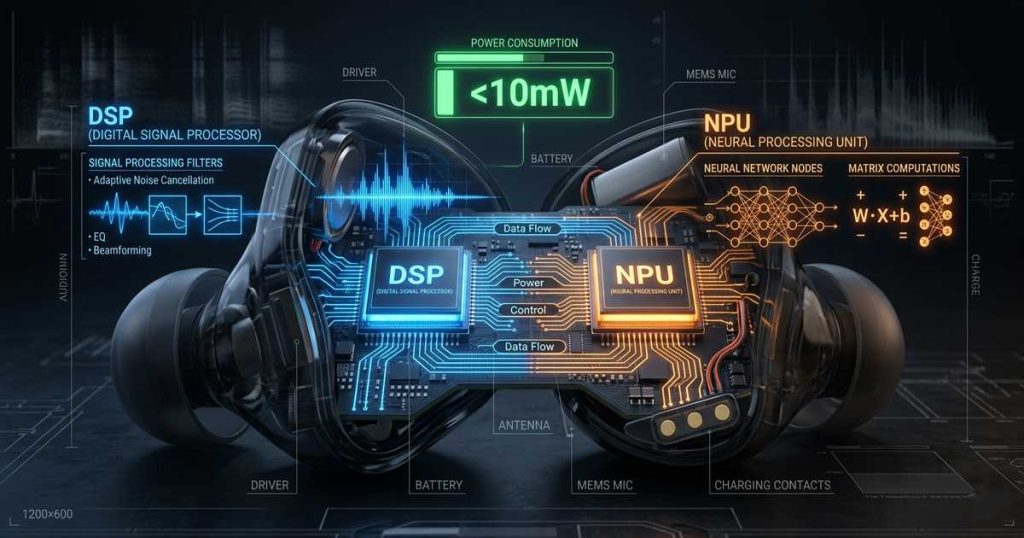

The conventional wisdom has long held that deploying continuous, real-time speech AI on battery-constrained wireless hearables is nearly impossible. Streaming deep learning models must process audio continuously, which imposes strict compute and I/O budgets that seem incompatible with the tiny, milliamp-hour-scale batteries inside true wireless earbuds. Yet 2025 marks an inflection point. Through DSP + NPU co-design and hardware-software optimization, the industry is now achieving always-on intelligent assistants that consume less than 10 milliwatts, ushering in a new generation of truly autonomous hearables.

⚡ The Challenge: Always-On AI in a Power-Constrained World

For hearables like wireless earbuds and smart glasses, “always-on” functionality means continuously processing audio streams to detect wake words, suppress noise, or enhance speech, all while drawing somewhere between microamps and a few milliamps of current. Traditional architectures struggle here. Running neural networks on a general-purpose CPU, or even on a DSP alone, often pushes power consumption beyond acceptable limits and drains tiny batteries in hours rather than days.

🔄 The Workload Diversity Problem

The fundamental tension lies in workload diversity. Speech AI pipelines combine:

- Signal processing tasks — filtering, beamforming, echo cancellation

- Neural network inference — keyword spotting, noise suppression

Each demands different computational strengths:

- 🎯 DSPs excel at deterministic signal processing with minimal latency

- 🧠 NPUs (Neural Processing Units) deliver orders-of-magnitude higher efficiency for matrix-heavy neural computations

The magic happens when they work in concert.

🔧 The Co-Design Breakthrough: Letting Specialists Do What They Do Best

Recent silicon innovations reveal a clear architectural pattern: heterogeneous compute clusters where DSP and NPU operate as peers under intelligent power management. This co-design approach assigns tasks based on natural affinity, then dynamically powers domains only when needed.
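To make the affinity idea concrete, here is a minimal sketch of how a pipeline's stages might be split between the two domains, and how that split tells the power manager which domains need to be on at all. The `AFFINITY` table and the `plan_domains` function are hypothetical names for illustration only; they do not correspond to any vendor SDK.

```python
# Hypothetical affinity table: which compute domain each pipeline stage prefers.
# The stage names come from the workload list above; the mapping is illustrative.
AFFINITY = {
    "filtering": "dsp",
    "beamforming": "dsp",
    "echo_cancellation": "dsp",
    "keyword_spotting": "npu",
    "noise_suppression": "npu",
}


def plan_domains(stages):
    """Group stages by preferred domain and report which domains must be powered."""
    plan = {"dsp": [], "npu": []}
    for stage in stages:
        plan[AFFINITY[stage]].append(stage)
    # A domain only needs power if at least one stage was assigned to it.
    powered = {domain for domain, work in plan.items() if work}
    return plan, powered


# A pure signal-processing pipeline leaves the NPU domain switched off.
plan, powered = plan_domains(["filtering", "beamforming"])
print(plan, powered)
```

Real power managers also have to account for wake-up latency and shared memory, which this sketch ignores.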

🏭 Actions Technology ATS323X Series

Unveiled in late 2024, this tri-core heterogeneous SoC integrates a HiFi5 DSP with an AI NPU using Mixed-mode SRAM-based Computation-in-Memory (MMSCIM) technology. Actions Technology reports:

- 📈 60× improvement in energy efficiency compared to DSP-only AI workloads

- ⚡ >90% power reduction

- 💪 100 GOPS of compute

🎵 Alif Semiconductor Balletto Family

The industry’s first BLE wireless MCU with integrated DSP and NPU combines:

- Arm Cortex-M55 core with Helium vector extensions — 500% DSP improvement

- Dedicated Ethos-U55 NPU — 46 GOPS

- aiPM technology — 700 nA stop-mode current

🎯 Reaching the Sub-10 mW Milestone

The “under 10 mW” threshold represents a critical inflection point for always-on hearables. At this power envelope, continuous speech AI becomes practical within the thermal and battery constraints of in-ear devices.
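A quick back-of-the-envelope calculation shows why this threshold matters. The sketch below assumes a 50 mAh cell at a nominal 3.7 V; both numbers are illustrative assumptions rather than figures from any product discussed here. It also counts only the speech-AI load, ignoring the Bluetooth radio, audio amplifier, and conversion losses.

```python
def runtime_hours(capacity_mah, voltage_v, load_mw):
    """Ideal runtime in hours for a constant load, ignoring all losses."""
    energy_mwh = capacity_mah * voltage_v  # mAh * V = mWh
    return energy_mwh / load_mw


# Assumed (hypothetical) earbud cell: 50 mAh at a nominal 3.7 V, i.e. 185 mWh.
CAPACITY_MAH = 50.0
VOLTAGE_V = 3.7

for load_mw in (100.0, 10.0, 1.0):
    hours = runtime_hours(CAPACITY_MAH, VOLTAGE_V, load_mw)
    print(f"{load_mw:6.1f} mW -> {hours:7.1f} h")
```

Under these assumptions a 10 mW always-on load alone would run for about 18.5 hours, while a 100 mW load would empty the same cell in under two.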

🔥 AONDevices AON1120: Pushing the Floor

Announced at CES 2025, this sensor fusion IC integrates a dual-NPU architecture with a hardware DSP and RISC-V core in a compact 40QFN package:

- ⚡ <260 µW — full processing mode (sound classification + wake-word recognition + voice commands)

- 👂 <80 µW — listening mode

- 🎙️ Detects over a dozen sound classes while performing keyword spotting simultaneously
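Because the chip has two operating points, its average draw depends on how often it leaves listening mode. The sketch below treats the quoted figures as upper bounds and blends them by duty cycle. The 10% duty cycle is an assumption chosen for illustration, and transition overhead is ignored.

```python
LISTEN_UW = 80.0   # quoted upper bound for listening mode, in microwatts
FULL_UW = 260.0    # quoted upper bound for full processing mode, in microwatts


def average_power_uw(full_duty):
    """Time-weighted average power for a given fraction of time in full mode."""
    if not 0.0 <= full_duty <= 1.0:
        raise ValueError("full_duty must be between 0 and 1")
    return full_duty * FULL_UW + (1.0 - full_duty) * LISTEN_UW


# Assumed duty cycle: full processing 10% of the time.
print(f"{average_power_uw(0.10):.1f} uW")
```

At the assumed 10% duty cycle the blended figure is about 98 µW, still two orders of magnitude below the 10 mW threshold.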

📱 NXP i.MX RT700: Domain-Specific Power Management

Designed for AI glasses and hearables, this chip partitions the system into functional domains:

- 🎤 Hardware Voice Activity Detector (HWVAD) in PDM microphone interface — listens continuously at near-zero power

- 💤 Only wakes HiFi4 DSP and eIQ Neutron NPU when speech is detected

- 📉 NPU consumes 1/106th the energy of a Cortex-M33 core for typical CNN operations
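The gating pattern described above can be sketched as a small state machine: a cheap detector runs on every frame, and the expensive DSP/NPU domain is awake only around detected speech. This is an illustrative model, not NXP's API. The function name and the `hangover_frames` parameter (how long the domain stays awake after speech stops) are assumptions.

```python
def gated_wake_schedule(vad_flags, hangover_frames=2):
    """Return one boolean per frame: True when the DSP/NPU domain is awake.

    vad_flags: per-frame output of the always-on voice activity detector.
    hangover_frames: hypothetical number of extra frames to stay awake
    after the last speech frame, so trailing audio is not cut off.
    """
    awake = []
    remaining = 0
    for speech in vad_flags:
        if speech:
            remaining = hangover_frames + 1  # this frame plus the hangover
        awake.append(remaining > 0)
        if remaining > 0:
            remaining -= 1
    return awake


vad = [False, False, True, False, False, False, False]
schedule = gated_wake_schedule(vad)
duty = sum(schedule) / len(schedule)
print(schedule, f"awake {duty:.0%} of frames")
```

The fraction of awake frames is what the heavier domains' power gets multiplied by, which is why a near-zero-power detector up front pays off when speech is sparse.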

💻 Hardware-Software Co-Design: The Secret Sauce

Achieving sub-10 mW operation isn’t just about silicon — it requires intimate hardware-software co-design.

🏥 University of Washington: NeuralAids Project

The project combines a GreenWaves GAP9 AI accelerator with optimized dual-path neural networks and quantization-aware training:

- Processes 6 ms audio chunks in 5.54 ms

- Consumes 71.6 mW, roughly seven times the 10 mW target, which shows that further architectural innovation is still needed
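The NeuralAids figures can be turned into a few derived quantities. The arithmetic below assumes the 71.6 mW figure is the average power while streaming, which the summary above does not state explicitly.

```python
CHUNK_MS = 6.0      # audio chunk duration
COMPUTE_MS = 5.54   # time to process one chunk
POWER_MW = 71.6     # reported power, assumed here to be the streaming average
TARGET_MW = 10.0    # the sub-10 mW threshold discussed in this article

# Below 1.0 means the system keeps up with the incoming audio.
real_time_factor = COMPUTE_MS / CHUNK_MS
# Slack left per chunk before the next one arrives.
headroom_ms = CHUNK_MS - COMPUTE_MS
# mW * ms = microjoules spent per chunk period.
energy_per_chunk_uj = POWER_MW * CHUNK_MS
# How far the design sits above the 10 mW target.
gap_factor = POWER_MW / TARGET_MW

print(f"real-time factor: {real_time_factor:.3f}")
print(f"headroom per chunk: {headroom_ms:.2f} ms")
print(f"energy per chunk: {energy_per_chunk_uj:.1f} uJ")
print(f"gap to target: {gap_factor:.2f}x")
```

The system meets its real-time deadline with less than half a millisecond to spare per chunk, so cutting power by simply running slower is not an option.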

⚡ Femtosense AI-ADAM-100: Leveraging Sparsity

This chip takes a different approach by leveraging sparsity — the presence of zeros in neural network matrices — to reduce computation:

- Arm Cortex-M0+ with Sparse Processing Unit NPU

- Eliminates operations on zero weights entirely

- Dramatically cuts power for voice processing tasks
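The idea of skipping zero weights can be illustrated with a plain matrix-vector product that counts the multiply-accumulate operations it actually performs. This is a conceptual sketch only, not a description of how the Femtosense hardware works. Real sparse accelerators typically store weights in a compressed format so zeros are never fetched in the first place.

```python
def sparse_matvec(weights, x):
    """Multiply a row-major weight matrix by x, skipping zero weights.

    Returns (output vector, number of multiply-accumulates performed).
    """
    out = []
    macs = 0
    for row in weights:
        acc = 0.0
        for w, xi in zip(row, x):
            if w != 0:  # a zero weight contributes nothing, so skip the work
                acc += w * xi
                macs += 1
        out.append(acc)
    return out, macs


# Toy 2x4 layer with 5 of its 8 weights pruned to zero.
W = [[0, 2, 0, 0],
     [1, 0, 0, 3]]
x = [1, 2, 3, 4]

y, macs = sparse_matvec(W, x)
dense_macs = len(W) * len(x)
print(y, f"{macs} MACs instead of {dense_macs}")
```

In this toy layer the sparse version does 3 multiply-accumulates where a dense one would do 8, and the saving scales with the fraction of weights that are zero.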

🚀 The Road Ahead: Truly Intelligent Hearables

“The biggest challenge is to maintain high accuracy without impacting power. That’s why we don’t see many always-on solutions today. The trend is to bring more capabilities to low-power states, from sensor fusion to privacy-centric edge processing.”

— Mouna Elkhatib, CEO of AONDevices, CES 2025

With DSP + NPU co-design now delivering always-on intelligence under 10 mW, and in some cases (such as the AON1120) under 1 mW, the next generation of hearables will:

- 🧠 Understand context

- 🔇 Suppress noise

- 👉 Recognize gestures

- 🔐 Authenticate users

All while lasting days on a charge. The era of truly intelligent earbuds has arrived.

📚 References

- Actions Technology. (2024). ATS323X Series: Tri-Core Heterogeneous SoC for Hearable AI. Product Brief.

- Alif Semiconductor. (2025). Balletto Family: BLE Wireless MCU with Integrated DSP and NPU. Technical Overview.

- AONDevices. (2025). AON1120: Ultra-Low Power Sensor Fusion IC for Always-On AI. CES 2025 Announcement.

- NXP Semiconductors. (2025). i.MX RT700: Domain-Specific AI Processor for Hearables and Smart Glasses. Product Documentation.

- University of Washington. (2025). NeuralAids: Real-Time Speech Enhancement on Battery-Constrained Hearing Aid Platforms. March 2025 Paper.

- Femtosense. (2025). AI-ADAM-100: Sparse Processing Unit for Voice AI. Technical Brief.