As artificial intelligence workloads diversify beyond massive data center training, the hardware landscape is fragmenting. While GPUs remain the dominant workhorse for deep learning, neuromorphic processors are emerging as highly specialized contenders for ultra-efficient, event-driven computation.

The comparison is often framed incorrectly. Neuromorphic chips are not designed to replace GPUs across the board. Instead, they target a fundamentally different efficiency regime—one optimized for sparse, temporal, and low-power inference workloads.

To understand where each architecture excels, we need to examine real efficiency benchmarks rather than theoretical hype.

Architectural Foundations: SIMD vs Event-Driven Computing

The core difference begins at the computational model.

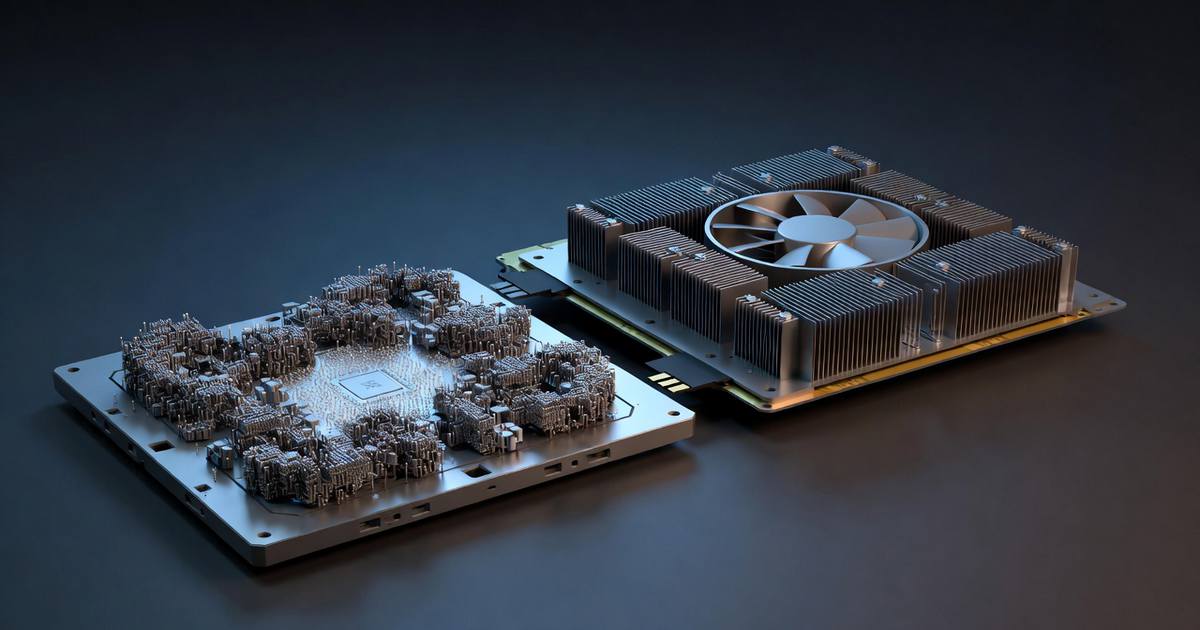

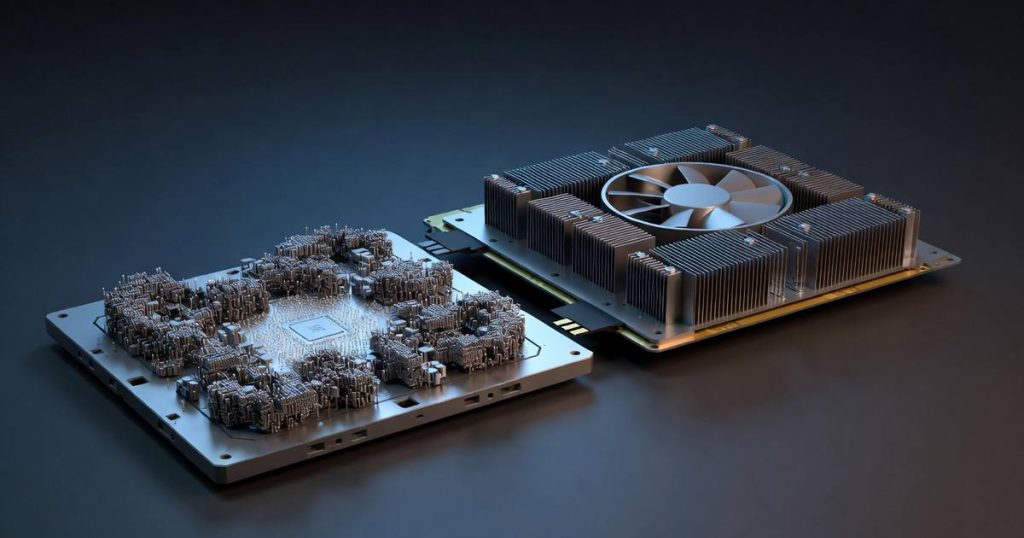

GPU Architecture

Modern GPUs are built around:

- massively parallel SIMD/SIMT execution

- dense matrix multiplication acceleration

- high memory bandwidth

- throughput-optimized pipelines

This makes them extraordinarily effective for:

- deep neural network training

- large-batch inference

- transformer workloads

- dense convolutional networks

However, GPUs run dense, clock-driven computation: unless sparsity is exploited explicitly, zero-valued activations still consume cycles and energy.

Neuromorphic Architecture

Neuromorphic processors operate on radically different principles:

- spiking neural networks (SNNs)

- event-driven computation

- asynchronous processing

- co-located memory and compute

- extreme sparsity exploitation

Instead of processing every timestep uniformly, neuromorphic chips compute only when spikes occur, dramatically reducing unnecessary switching activity.

This architectural shift is the foundation of their efficiency advantage.
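A minimal sketch of the difference, assuming a single fully connected layer and counting one operation per synaptic update. The function names, layer sizes, and spike probability are illustrative assumptions, not measurements from any chip.

```python
import random


def dense_ops(num_inputs, num_outputs, timesteps):
    """Operations when every input is processed at every timestep."""
    return num_inputs * num_outputs * timesteps


def make_spike_trains(num_inputs, timesteps, spike_prob, seed=0):
    """Random 0/1 spike flags: one list per timestep, one flag per input."""
    rng = random.Random(seed)
    return [
        [1 if rng.random() < spike_prob else 0 for _ in range(num_inputs)]
        for _ in range(timesteps)
    ]


def event_driven_ops(spike_trains, num_outputs):
    """Operations when only inputs that spiked trigger synaptic updates."""
    total_spikes = sum(sum(step) for step in spike_trains)
    return total_spikes * num_outputs


if __name__ == "__main__":
    # Hypothetical layer: 256 inputs, 128 outputs, 5% of inputs spike per step.
    trains = make_spike_trains(num_inputs=256, timesteps=100, spike_prob=0.05)
    dense = dense_ops(256, 128, 100)
    sparse = event_driven_ops(trains, 128)
    print(f"dense: {dense} ops, event-driven: {sparse} ops")
```

Operation count is only a proxy for energy, but it shows why the savings track input sparsity: with no spikes the event-driven count is zero, and with every input spiking at every step it equals the dense count.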

Benchmark Dimension #1: Energy per Inference

Energy efficiency is where neuromorphic hardware makes its strongest case.

Typical GPU Efficiency

For conventional deep learning inference:

- data center GPUs: on the order of 1–10 millijoules per inference, varying widely with model size, batch size, and numeric precision

- edge GPUs: lower absolute power, but fixed overheads are amortized poorly at small batch sizes

- idle power remains non-trivial

GPUs achieve excellent throughput per watt at scale but suffer in always-on, low-duty-cycle scenarios, where idle power is paid whether or not useful work is being done.

Neuromorphic Efficiency Profile

In published edge workloads, neuromorphic systems often demonstrate:

- microjoule-level inference energy

- near-zero idle power

- power scaling proportional to event rate

- strong efficiency in sparse sensory tasks

The key phrase is event proportionality. If nothing changes in the input stream, power draw can drop dramatically—something GPUs cannot easily replicate.

Practical implication: For always-on sensing (audio wake words, anomaly detection, robotics perception), neuromorphic chips can deliver order-of-magnitude efficiency gains.
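Event proportionality can be made concrete with a simple energy model. The sketch below assumes a clocked device that draws idle power between bursts of work and an event-driven device with a small static draw plus a fixed cost per event. All function names and parameters are hypothetical and should be replaced with measured values for real hardware.

```python
def clocked_energy_joules(idle_watts, active_watts, seconds, duty_cycle):
    """Energy for a device that draws idle power between bursts of work."""
    active_time = seconds * duty_cycle
    idle_time = seconds - active_time
    return idle_watts * idle_time + active_watts * active_time


def event_driven_energy_joules(static_watts, joules_per_event, seconds, num_events):
    """Energy for a device whose dynamic cost scales with the event count."""
    return static_watts * seconds + joules_per_event * num_events


def break_even_events(idle_watts, active_watts, static_watts,
                      joules_per_event, seconds, duty_cycle):
    """Event count at which both hypothetical devices use the same energy."""
    clocked = clocked_energy_joules(idle_watts, active_watts, seconds, duty_cycle)
    return (clocked - static_watts * seconds) / joules_per_event


if __name__ == "__main__":
    # Placeholder numbers chosen only to exercise the model.
    window_s = 3600
    clocked = clocked_energy_joules(2.0, 10.0, window_s, duty_cycle=0.01)
    quiet = event_driven_energy_joules(0.01, 1e-6, window_s, num_events=10_000)
    print(f"clocked: {clocked:.1f} J, event-driven (quiet input): {quiet:.3f} J")
```

In this model the event-driven device's energy falls toward its static floor as the input goes quiet, while the clocked device's energy is dominated by idle power at low duty cycles. The break-even event count marks where the advantage disappears for a given pair of devices.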

Benchmark Dimension #2: Latency Characteristics

Latency behavior differs in subtle but important ways.

GPU Latency Profile

GPUs excel at:

- high-throughput batch processing

- pipeline-parallel workloads

- large tensor operations

But they incur:

- kernel launch overhead

- memory transfer latency

- batching trade-offs

For single-sample, real-time inference, GPUs are often underutilized.
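The batching trade-off can be sketched with a deliberately simple linear cost model: each batch pays a fixed overhead (standing in for kernel launch and memory transfer) plus a per-sample compute cost. The model and all parameter names are assumptions for illustration; real GPUs scale less cleanly than this.

```python
def batch_latency_ms(batch_size, overhead_ms, per_sample_ms):
    """Time to process one batch: fixed overhead plus per-sample compute."""
    return overhead_ms + batch_size * per_sample_ms


def throughput_per_second(batch_size, overhead_ms, per_sample_ms):
    """Samples processed per second when batches run back to back."""
    return 1000.0 * batch_size / batch_latency_ms(batch_size, overhead_ms, per_sample_ms)


def worst_case_latency_ms(batch_size, overhead_ms, per_sample_ms, arrival_interval_ms):
    """Latency seen by the first sample in a batch.

    It waits for the rest of the batch to arrive, then for the batch to finish.
    """
    fill_wait = (batch_size - 1) * arrival_interval_ms
    return fill_wait + batch_latency_ms(batch_size, overhead_ms, per_sample_ms)


if __name__ == "__main__":
    # Hypothetical costs: 0.5 ms overhead, 0.05 ms per sample, one sample every 10 ms.
    for batch in (1, 8, 64):
        tput = throughput_per_second(batch, 0.5, 0.05)
        lat = worst_case_latency_ms(batch, 0.5, 0.05, arrival_interval_ms=10.0)
        print(f"batch={batch:3d}  throughput={tput:9.1f}/s  worst-case latency={lat:7.2f} ms")
```

Under these assumptions, larger batches amortize the fixed overhead and raise throughput, but the first sample in each batch waits longer. At batch size 1 the overhead dominates, which is one way to read "underutilized".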

Neuromorphic Latency Profile

Neuromorphic processors offer:

- extremely low event response latency

- continuous-time processing

- no batching requirement

- deterministic spike propagation

This makes them particularly effective for:

- robotics reflex loops

- real-time sensor fusion

- edge anomaly detection

- closed-loop control systems

However, their latency advantage diminishes on dense, frame-based workloads.

Benchmark Dimension #3: Throughput and Scalability

This is where GPUs still dominate decisively.

GPU Throughput Leadership

For workloads such as:

- transformer inference

- large CNN pipelines

- generative AI

- foundation model serving

GPUs benefit from:

- mature software stacks (CUDA, TensorRT)

- tensor core acceleration

- massive ecosystem support

- highly optimized kernels

Neuromorphic systems currently cannot match GPUs in dense floating-point throughput.

Neuromorphic Scaling Constraints

Current limitations include:

- immature software tooling

- limited model portability

- difficulty mapping standard deep nets to SNNs

- smaller developer ecosystem

- precision constraints

While research is progressing rapidly, production-scale deployment remains niche.

Benchmark Dimension #4: Always-On Edge Workloads

This is the battleground where neuromorphic computing is most disruptive.

High-Value Edge Scenarios

Neuromorphic processors show strong promise in:

- keyword spotting

- acoustic anomaly detection

- low-power vision sensors

- wearable health monitoring

- autonomous micro-robots

- smart IoT nodes

In these environments, the combination of ultra-low idle power, event-driven compute, and low thermal output creates a compelling advantage over GPUs.

The Software Ecosystem Reality

Hardware alone does not determine winners.

GPU Software Maturity

GPUs benefit from over a decade of ecosystem development:

- PyTorch and TensorFlow integration

- production deployment pipelines

- pretrained model availability

- large developer talent pool

This inertia is extremely difficult to overcome.

Neuromorphic Software Challenges

Key friction points include:

- SNN training complexity

- limited standardized frameworks

- conversion overhead from ANN to SNN

- debugging difficulty

- benchmarking inconsistency

Until tooling improves, neuromorphic adoption will likely remain use-case specific rather than general-purpose.
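One source of ANN-to-SNN conversion overhead is easy to illustrate with rate coding, a common conversion approach in which an activation value is represented by how often a neuron spikes. The sketch below is a simplified integrate-and-fire encoder with reset by subtraction, assuming activations are normalized to the range 0 to 1. It is not the method of any particular toolchain, and the function names are hypothetical.

```python
def rate_encode(activation, timesteps, threshold=1.0):
    """Encode an activation in [0, 1] as a spike train of the given length."""
    membrane = 0.0
    spikes = []
    for _ in range(timesteps):
        membrane += activation          # constant input current each step
        if membrane >= threshold:
            spikes.append(1)
            membrane -= threshold       # reset by subtraction keeps the remainder
        else:
            spikes.append(0)
    return spikes


def rate_decode(spikes):
    """Recover the approximate activation as the fraction of steps that spiked."""
    return sum(spikes) / len(spikes)


if __name__ == "__main__":
    value = 0.37
    for steps in (10, 100, 1000):
        approx = rate_decode(rate_encode(value, steps))
        print(f"timesteps={steps:5d}  decoded={approx:.3f}  error={abs(approx - value):.3f}")
```

The decoded value can only take multiples of 1/timesteps, so higher precision requires longer spike trains, and longer spike trains mean more events, more latency, and more energy. This is one reason a converted network does not automatically inherit the efficiency of a natively sparse workload.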

Strategic Outlook: Complement, Not Replace

The most realistic near-term architecture landscape looks hybrid.

GPUs will continue to dominate:

- foundation model training

- large-scale inference

- generative AI workloads

- cloud AI infrastructure

Neuromorphic processors will expand in:

- ultra-low-power edge AI

- always-on sensing

- event-driven robotics

- battery-constrained devices

The key insight is that efficiency is workload-dependent, not absolute.

Bottom Line

Neuromorphic processors are not universal GPU replacements. But in the specific domain of sparse, always-on, low-power AI, they already demonstrate meaningful efficiency advantages.

GPUs remain unmatched for dense, high-throughput deep learning and will likely retain that position for the foreseeable future. Meanwhile, neuromorphic hardware is carving out a high-value niche at the extreme edge of the power-efficiency frontier.

The next phase of AI hardware evolution will not be winner-take-all—it will be heterogeneous, workload-aware, and increasingly specialized.
