RISC-V, an open-standard instruction set architecture (ISA), has rapidly moved from academic interest to commercial relevance, particularly in AI edge computing. In 2025, RISC-V designs are increasingly adopted in devices ranging from smart cameras and IoT sensors to AI accelerators embedded in industrial and consumer systems.

Edge AI workloads demand low latency, energy efficiency, and highly customizable hardware. With its modular and extensible ISA, RISC-V is well suited to these requirements: developers can design application-specific accelerators that can outperform general-purpose CPUs or GPUs in power-constrained environments.

Edge AI devices can also be paired with other emerging computing approaches, such as photonic computing hardware, which aims to accelerate parallel workloads.

This article explores the current adoption trends, technical drivers, and ecosystem factors influencing RISC-V deployment in AI edge devices.

Why RISC-V Matters for Edge AI

Arm and x86 cores remain the incumbents in embedded and edge computing, but their fixed, licensed ISAs leave limited room for custom instructions in highly specialized AI scenarios.

Key Advantages of RISC-V

- Open ISA
  - No licensing fees for the ISA itself
  - Freedom to add custom instructions for neural acceleration
- Extensibility
  - Custom vector or tensor extensions for AI workloads
  - Efficient fixed-point and mixed-precision arithmetic
- Scalability
  - Tiny cores for ultra-low-power IoT
  - Multi-core or multi-threaded designs for edge AI gateways
- Ecosystem flexibility
  - Supports both proprietary and open accelerator integrations
  - Facilitates rapid iteration and silicon customization

For edge AI, these properties can translate into better compute-per-watt, lower cost, and faster time-to-market for device makers.
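The fixed-point and mixed-precision arithmetic listed above can be illustrated with a small model: values are stored as 8-bit integers, multiplied, and summed in a wider accumulator, then scaled back to real numbers. This is only a sketch in plain Python. The function names, sample values, and scales are assumptions made for illustration and are not taken from any RISC-V toolchain or model.

```python
INT8_MIN, INT8_MAX = -128, 127


def quantize(values, scale):
    """Map real values to int8 codes so that real is approximately scale * code."""
    codes = []
    for v in values:
        code = round(v / scale)
        codes.append(max(INT8_MIN, min(INT8_MAX, code)))  # saturate to int8
    return codes


def int8_dot(a_codes, b_codes):
    """Multiply int8 codes and sum the products in a wide accumulator.

    Python integers never overflow; on hardware this role is typically
    played by a 32-bit accumulator.
    """
    acc = 0
    for a, b in zip(a_codes, b_codes):
        acc += a * b
    return acc


def dequantize(acc, scale_a, scale_b):
    """Convert the integer accumulator back to a real value."""
    return acc * scale_a * scale_b


# Illustrative values and scales (assumptions, not from a real model).
weights = [0.5, -0.25, 0.75, 1.0]
inputs = [1.0, 2.0, -1.0, 0.5]
W_SCALE, X_SCALE = 0.01, 0.02

w_codes = quantize(weights, W_SCALE)
x_codes = quantize(inputs, X_SCALE)
acc = int8_dot(w_codes, x_codes)
approx = dequantize(acc, W_SCALE, X_SCALE)
exact = sum(w * x for w, x in zip(weights, inputs))
print(acc, approx, exact)
```

Narrow operands with a wide accumulator are the "mixed precision" part: the multiplies stay cheap while the sum keeps enough range to avoid overflow.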

Adoption Trends in 2025

1. Consumer IoT Devices

- AI-enabled cameras, voice assistants, and smart sensors increasingly use RISC-V cores for pre-processing and inference.

- Low-power image classification, speech detection, and anomaly recognition are common workloads.

- Vendors leverage custom vector instructions for efficient convolutional operations.
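To show why vector instructions suit convolutional workloads, the sketch below computes a 1-D convolution in chunks of up to `max_vl` outputs, applying one modeled vector multiply-accumulate per kernel tap per chunk. It is a plain-Python model of the strip-mining pattern, not vendor code: `max_vl`, the function names, and the sample data are assumptions, and the lane operations that hardware would run in parallel are simulated with list comprehensions.

```python
def conv1d_scalar(signal, kernel):
    """Reference 'valid' 1-D convolution (correlation form, kernel not flipped)."""
    n_out = len(signal) - len(kernel) + 1
    return [
        sum(signal[i + k] * kernel[k] for k in range(len(kernel)))
        for i in range(n_out)
    ]


def conv1d_strip_mined(signal, kernel, max_vl=4):
    """Same result, computed max_vl outputs at a time.

    Returns the outputs and the number of modeled vector MAC operations.
    """
    n_out = len(signal) - len(kernel) + 1
    out = [0] * max(n_out, 0)
    vector_macs = 0
    start = 0
    while start < n_out:
        vl = min(max_vl, n_out - start)  # elements handled in this pass
        acc = [0] * vl
        for k, w in enumerate(kernel):
            # One modeled vector MAC: every lane j does
            # acc[j] += signal[start + j + k] * w
            window = signal[start + k:start + k + vl]
            acc = [a + s * w for a, s in zip(acc, window)]
            vector_macs += 1
        out[start:start + vl] = acc
        start += vl
    return out, vector_macs


signal = list(range(1, 11))  # illustrative input
kernel = [1, 0, -1]          # simple difference filter
reference = conv1d_scalar(signal, kernel)
result, vector_macs = conv1d_strip_mined(signal, kernel, max_vl=4)
scalar_macs = len(reference) * len(kernel)
print(result, vector_macs, scalar_macs)
```

In this model the number of issued multiply-accumulate operations drops roughly by the vector length, which is the effect the custom vector instructions are meant to deliver.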

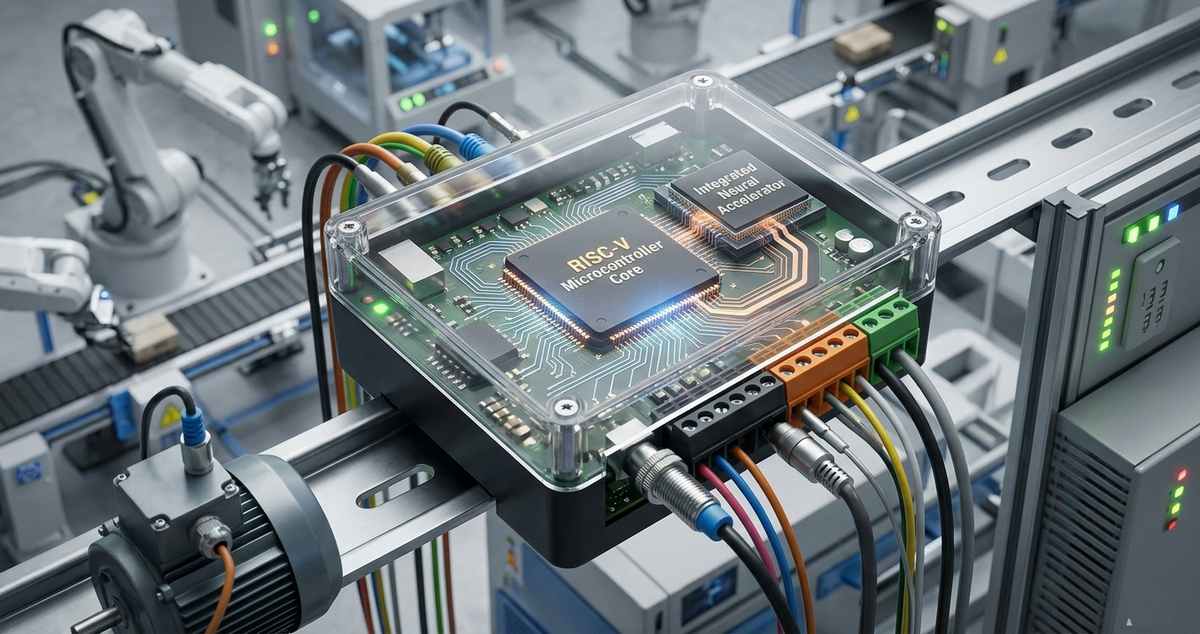

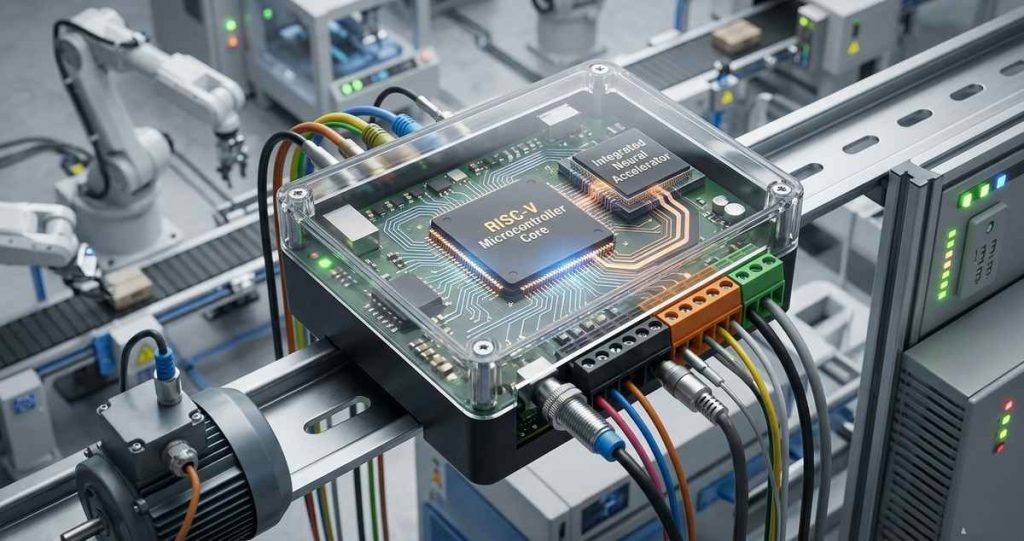

2. Industrial Edge Devices

- Predictive maintenance, process monitoring, and autonomous robotics benefit from deterministic low-latency inference.

- RISC-V cores allow heterogeneous designs, pairing scalar cores with AI-specific accelerators on the same chip.

- Edge deployment reduces cloud dependency, cutting operational costs and latency.

3. Automotive AI Applications

- Some advanced driver-assistance systems (ADAS) and cockpit AI functions now use RISC-V-based accelerators for sensor fusion and inference.

- Modular RISC-V cores integrate tightly with custom neural network units, balancing real-time requirements with energy efficiency.

Technical Drivers of Adoption

Custom Instruction Extensions

- Developers can embed matrix multiply, vector, or tensor instructions directly into the core.

- On-device AI benefits from reduced instruction overhead and lower energy consumption per operation.
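A back-of-the-envelope model can make "reduced instruction overhead" concrete. The sketch below compares a scalar dot-product loop with a hypothetical custom instruction that multiply-accumulates several element pairs at once. The per-element costs, the `lanes` parameter, and the function names are assumptions for illustration; loop overhead is ignored, and real counts depend on the core and the compiler.

```python
def scalar_dot_instructions(n):
    """Rough count for a scalar loop: 2 loads + 1 multiply + 1 add per element."""
    return 4 * n


def fused_dot_instructions(n, lanes):
    """Rough count with a hypothetical fused multiply-accumulate instruction.

    Each group of `lanes` element pairs is assumed to need 2 packed loads
    and 1 custom MAC instruction.
    """
    if lanes < 1:
        raise ValueError("lanes must be at least 1")
    groups = -(-n // lanes)  # ceiling division
    return 3 * groups


n = 64      # illustrative vector length
lanes = 4   # assumed number of element pairs per custom instruction
scalar_count = scalar_dot_instructions(n)
fused_count = fused_dot_instructions(n, lanes)
print(scalar_count, fused_count, scalar_count / fused_count)
```

Fewer fetched and decoded instructions per useful operation is one of the ways such extensions can lower energy per inference, though the actual saving has to be measured on the target silicon.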

Low-Power Edge Performance

- RISC-V microarchitectures scale from sub-10 mW sensor nodes to multi-watt inference engines.

- Power-efficient AI execution is critical for always-on monitoring applications.
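For always-on monitoring, average power usually matters more than peak power, because the device spends most of its time asleep. The following sketch estimates average power and battery life for a duty-cycled sensor node. All figures (10 mW active, 0.1 mW sleep, 5% duty cycle, 660 mWh battery) are illustrative assumptions, not measurements of any RISC-V part.

```python
def average_power_mw(active_mw, sleep_mw, duty_cycle):
    """Time-weighted average power for a node active `duty_cycle` of the time."""
    if not 0.0 <= duty_cycle <= 1.0:
        raise ValueError("duty_cycle must be between 0 and 1")
    return active_mw * duty_cycle + sleep_mw * (1.0 - duty_cycle)


def battery_life_hours(capacity_mwh, avg_power_mw):
    """Ideal battery life, ignoring self-discharge and converter losses."""
    return capacity_mwh / avg_power_mw


# Illustrative numbers only (assumptions).
avg_mw = average_power_mw(active_mw=10.0, sleep_mw=0.1, duty_cycle=0.05)
life_h = battery_life_hours(capacity_mwh=660.0, avg_power_mw=avg_mw)
print(f"average power: {avg_mw:.3f} mW, battery life: {life_h:.0f} h")
```

Under these assumptions, halving either the active power or the time spent awake has a much larger effect on battery life than trimming sleep current.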

Integration with AI Accelerators

- Many SoCs combine RISC-V cores with NPUs, DSPs, or FPGA blocks.

- Flexible interconnects and memory hierarchies allow optimal workload partitioning between general-purpose and specialized AI units.
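Workload partitioning between a general-purpose core and an accelerator can be sketched as a simple assignment pass: operators the accelerator supports run there, and everything else falls back to the CPU. The operator names and the supported set below are hypothetical, and real runtimes also weigh data-transfer cost and memory placement, which this sketch does not model.

```python
# Hypothetical set of operators the NPU can execute.
NPU_SUPPORTED_OPS = {"conv2d", "matmul", "relu"}


def partition(ops, npu_ops=NPU_SUPPORTED_OPS):
    """Group consecutive operators by target unit ("npu" or "cpu").

    Consecutive operators on the same unit are merged into one segment,
    so each segment boundary corresponds to one hand-off between units.
    """
    segments = []
    for op in ops:
        target = "npu" if op in npu_ops else "cpu"
        if segments and segments[-1][0] == target:
            segments[-1][1].append(op)
        else:
            segments.append((target, [op]))
    return segments


# Illustrative model graph, flattened to execution order.
model_ops = ["resize", "conv2d", "relu", "conv2d", "softmax"]
segments = partition(model_ops)
handoffs = len(segments) - 1
for target, ops in segments:
    print(target, ops)
```

Counting hand-offs is a crude proxy for interconnect traffic: a partition with fewer, longer accelerator segments generally moves less data between units.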

Software and Tooling

- Growing compiler support (GCC, LLVM, TVM integration)

- Pre-built AI inference runtimes for TensorFlow Lite and ONNX models

- Open-source verification tools improve confidence for safety-critical edge deployments
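On the tooling side, GCC and LLVM select RISC-V extensions through an ISA string such as `rv64gcv` passed to `-march`. The sketch below parses a simplified form of that string to check whether the vector extension is enabled. It assumes lowercase-compatible input, no version suffixes, and multi-letter extensions separated by underscores; `parse_isa_string` is a hypothetical helper, not part of any toolchain.

```python
# "g" is shorthand for the general-purpose base plus standard extensions.
G_EXPANSION = ["i", "m", "a", "f", "d", "zicsr", "zifencei"]


def parse_isa_string(isa):
    """Split a simplified RISC-V ISA string into (xlen, extensions).

    Assumes no version numbers and underscore-separated multi-letter
    extensions, e.g. "rv64gcv" or "rv32imac_zba_zbb".
    """
    isa = isa.lower()
    if isa[:4] not in ("rv32", "rv64"):
        raise ValueError(f"unsupported ISA string: {isa!r}")
    xlen = int(isa[2:4])
    single_letters, *multi_letter = isa[4:].split("_")
    extensions = []
    for letter in single_letters:
        extensions.extend(G_EXPANSION if letter == "g" else [letter])
    extensions.extend(name for name in multi_letter if name)
    # Drop duplicates while keeping first-seen order.
    return xlen, list(dict.fromkeys(extensions))


xlen, extensions = parse_isa_string("rv64gcv")
has_vector = "v" in extensions
print(xlen, extensions, has_vector)
```

A check like this can gate whether a build or runtime selects vectorized kernels or falls back to scalar code.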

Market Barriers

Despite strong technical advantages, adoption faces challenges:

- Ecosystem maturity
  - Less mature than Arm for general embedded development
  - Limited pre-certified silicon and reference designs
- Toolchain and library support
  - AI frameworks require adaptation for custom RISC-V vector extensions
  - Commercial software stack is still evolving
- Supply chain and manufacturing
  - Fewer vendors shipping mature, optimized RISC-V silicon at volume
  - Some high-volume industrial customers remain conservative

Outlook for 2025–2028

- Small to medium edge AI devices will increasingly adopt RISC-V for specialized inference.

- Hybrid architectures combining RISC-V cores and NPUs are likely to account for a growing share of new designs.

- The open-source nature of RISC-V is encouraging startups and semiconductor IP providers to innovate rapidly.

- Adoption in high-performance edge gateways and industrial applications will drive ecosystem maturity.

Overall, RISC-V adoption in AI edge devices is expected to grow steadily, particularly where power efficiency, modularity, and custom acceleration are key design goals.

Bottom Line

RISC-V’s open, extensible ISA makes it a strong choice for AI edge devices in 2025. Its modularity enables efficient, low-power acceleration, and adoption continues to spread across consumer IoT, industrial automation, and automotive domains. Although ecosystem maturity and toolchain limitations remain, the combination of flexibility, performance-per-watt, and customizability positions RISC-V as a key architecture for the next generation of edge AI devices.
